We wouldn’t be anywhere without our customers.

This isn’t just about keeping the lights on. It’s really about building a better video hosting platform for business by listening carefully to what our customers are telling us.

Gathering, analyzing, and implementing customer feedback is one of the most important activities a business can embark on. All too often, it’s left too late, or done as an afterthought.

Here’s how we moved from reactive, anecdotal user research to a methodical, customer-centric process.

What We Were Doing

It’s normal as companies grow for certain processes to evolve in less-than-desirable ways. We found ourselves in a situation where the size of our user base had doubled, but internal metrics and communication around customer feedback hadn’t kept pace.

We were listening to what our customers had to say, and were proud of the highly personal support we were providing.

However, the lack of structure around customer feedback meant we had no insight into how often each feature was being requested, or where those requests should rank among our priorities. It also meant we were unlikely to be targeting the right points of improvement for our app, because a particularly vocal minority could sway our decisions.

How We Fixed It

First, we had to identify what wasn’t working. We also had to determine, much more specifically, what we wanted to get out of customer feedback. Then, we had to work backwards to define the processes that would get us where we wanted to go.

To pinpoint the pain points in our current process, we had a company meeting to review everything pertaining to customer feedback. We looked at the tools we were using, the sources of feedback, and how we were processing it. We also looked at recent customer feedback, and traced the communication and associated outcome internally. This included instances where a solution was implemented immediately, as well as ones that were shelved.

We found specific types of negative feedback, such as when a feature wasn’t working as advertised, tended to be resolved very quickly. Generally speaking, that’s a good thing. Somewhat unsurprisingly, we also found the squeakiest wheels tended to get the most grease.

We struggled a lot more with feedback that didn’t include a direct request. For example, if someone described a use case for video, and we didn’t quite have the features they had in mind, we didn’t always treat it as a feature request. We also realized we could do a lot better with follow-up in instances where a solution was implemented.

Then, we brainstormed how we could do all of it in a way that would mean less feedback fell through the cracks, while ensuring we had our priorities straight. We needed a process that was in line with our product strategy, and directly informed by customer demand.

What We’re Doing Now

We changed quite a bit of how we handled customer feedback, and it’s still a work in progress. We strongly believe there’s always room for improvement.

That being said, we are in a much better place now.

Where Customer Feedback Comes From

This part we can’t really change, but it’s important to understand so you know what we are working with.

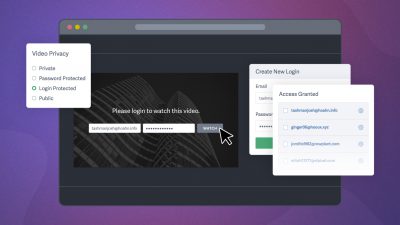

We get customer feedback primarily from people writing to our support team. Since SproutVideo is a highly stable product, with 99.998% uptime in the last several months, we don’t get many bug reports or serious issues of that nature.

Most of the time, our support team is handling pre-sales questions, and helping free trial users set up their new accounts to their specifications. Hidden in there are a lot of points of feedback about how people want our product to work, and how they’d like to be able to use it.

After email, live chat is a major source of customer feedback. Live chat has added benefits over email, because it enables us to ask questions in real time, and get more precise feedback.

Social media is the final source of customer feedback, although not nearly as big for us as live chat or email.

Centralizing Feedback

An important change was to ensure all feedback was arriving in the same place, so we could standardize how it was handled. It also meant everyone who needed to review it would know where to find it.

Since social media was handled by a different team than customer support and success, we hadn’t previously routed messages received through social media to our support inbox. Doing so enabled us to treat all feedback the same way, no matter how it came in.

New Tools

One of the biggest gaps we identified through this process was the silent majority of our customers: people who never contacted us. We figured they still might have something to say, and looked for tools that would enable us to reach them.

A major win for us was FullStory. Using FullStory, we can review browsing sessions on our platform, both from the past and in real time. When we’re considering changes to workflows within the app, or confused about what might have gone wrong for a particular user, FullStory is invaluable.

For example, say a free trial user uploaded a video to our platform, but didn’t activate their account. We can review how they were using our application to see if they searched for features we don’t offer, or encountered any difficulties using our app. If we notice a pattern, we flag it as an issue in Trello, and try to come up with solutions.

Better Outreach

Simply asking for feedback is a great way to get more of it. We always want to know what we could do better or differently. So, we started doing more outreach using email.

We targeted a few specific customer segments with this outreach: new free trial users, free trial users who never activated their accounts, and people who wrote in to support with a question, but never responded to our reply.

All new free trial users on our platform hear from their dedicated support rep shortly after they open their account. The aim is to understand their goals, and to help as needed. These conversations provide valuable information about how people would like to use our platform, and how they want to share video online in general.

For free trial users who never activated, we simply send a survey to try to get a sense of why it didn’t work out. The initial survey is automated, but we do follow up personally depending on the response.

When we reach out about a past support ticket, sometimes we get a simple, “Yes, everything’s great now!” but other times, we might get another question. These efforts help ensure we’re learning as much as we can from each customer interaction, and providing the best support possible.

Tagging

Now that all our messages were in one place, and we were getting more information, we expanded tagging dramatically. We also standardized the tags we would use for different types of feedback. This helped not only with search, but also allowed us to analyze conversations in useful ways (more on that below).
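
If you want to try standardizing tags yourself, here is a minimal sketch in Python of one way to do it. The tag names and aliases below are hypothetical examples, not the actual tags we use, and the helper functions are illustrative only. The idea is simply to map the many ways a teammate might label a message onto one agreed-upon tag.

```python
# Hypothetical tag vocabulary: each canonical tag maps to the aliases
# people might type. These names are assumptions for illustration.
CANONICAL_TAGS = {
    "feature-request": {"feature request", "feature-request", "wishlist"},
    "bug-report": {"bug", "bug report", "bug-report"},
    "pain-point": {"pain point", "pain-point", "confusing"},
    "praise": {"praise", "happy customer", "kudos"},
}

# Reverse lookup table: alias -> canonical tag.
ALIASES = {
    alias: canonical
    for canonical, aliases in CANONICAL_TAGS.items()
    for alias in aliases
}


def normalize_tag(raw):
    """Return the canonical tag for a raw label, or None if unrecognized."""
    key = " ".join(raw.strip().lower().split())  # trim, lowercase, collapse spaces
    return ALIASES.get(key)


def normalize_tags(raw_tags):
    """Normalize a list of raw labels, dropping unknowns and duplicates."""
    result = []
    for raw in raw_tags:
        tag = normalize_tag(raw)
        if tag is not None and tag not in result:
            result.append(tag)
    return result
```

Returning `None` for unrecognized labels, rather than guessing, makes it easy to spot labels that need to be added to the shared vocabulary.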

Regular Reporting

We started circulating a company-wide email recapping customer communications on a weekly basis. This report includes a high-level look at who was writing in to support, as well as a count of votes for different feature requests, potential pain points within the app, and bug reports. We also add a couple of quotes from happy customers to keep things fun.
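
For anyone building a similar recap, the counting part can be sketched in a few lines of Python. This is an illustration under assumptions, not our actual reporting code: the `Conversation` record, its fields, the `feature-request` tag name, and the `weekly_summary` function are all hypothetical, and we assume each tagged conversation has already been exported from the support inbox.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class Conversation:
    """Hypothetical record for one tagged support conversation."""
    received: date
    tags: List[str] = field(default_factory=list)
    feature: Optional[str] = None  # which feature was requested, if any


def weekly_summary(conversations, week_start):
    """Tally tags and feature-request votes for the 7 days from week_start."""
    week_end = week_start + timedelta(days=7)
    in_week = [c for c in conversations if week_start <= c.received < week_end]

    # How many conversations carried each tag this week.
    by_tag = Counter(tag for c in in_week for tag in c.tags)

    # One vote per conversation that requested a named feature.
    votes = Counter(
        c.feature
        for c in in_week
        if "feature-request" in c.tags and c.feature
    )

    return {
        "total": len(in_week),
        "by_tag": dict(by_tag),
        "feature_votes": votes.most_common(),  # most requested first
    }
```

Counting one vote per conversation, rather than one per message, helps keep a single very vocal customer from inflating the numbers.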

The Outcome

Our analysis of customer feedback is much more efficient than before. We are no longer over-reliant on anecdotes from recent conversations.

Looking at important trends in customer feedback on a regular basis enables us to easily determine what we should be working on next. Project planning has shifted to be truly customer-centric.

We also feel more in touch with important evolutions in consumer expectations. If a new feature is suddenly in demand, we’re sure to hear about it.

Finally, we’re much more coordinated internally. Rather than playing catch-up with customer feedback, the whole team is up to speed and on the same page.

Naturally, this is a process, and we still have a lot to learn about how best to handle customer feedback. We’re all ears if you have any pointers! Please share in the comments below.